Is Nature Efficient?

Is nature efficient? The question is provocative and, if answered in the affirmative, also suggestive. Is there some intelligence to nature, in other words, some planning agent that sets events up so that everything is just right, like a factory assembly line? I do have my reasons for thinking that nature is efficient, but I don’t believe that there’s a plan. More and more I see that the world as we observe it is put together, or perhaps assembles itself, using a set of very basic rules, and the rules of probability play a key role in guiding natural phenomena toward efficient paths. This view is not necessarily in opposition to the second law of thermodynamics and the idea of entropy, which would seem to gum up the works. Disorder, which entropy measures, is all around us, yet nature generates phenomena that appear to model efficiency.

The classic example of efficiency is Fermat’s principle of least time in optics. Although the idea dates back to Euclid in regard to rays reflected from a flat mirror, Fermat was able to show that light always takes the path of least overall time while travelling between any two points. So it follows the most efficient path in this sense. In particular, Fermat was able to show that even when a light ray passes from one medium to another, air to water perhaps, the refracted path is the quickest one, even though it is no longer the shortest in distance.
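The refraction case can be checked numerically. What follows is only a minimal sketch under assumed values: the positions of `A` and `B`, the two speeds, and the helper names are all made up for illustration. It searches for the crossing point on the surface that minimizes travel time and then compares the sines of the two angles, which should come out in the same ratio as the speeds (Snell's law).

```python
import math

# Assumed geometry: source A in air above a flat surface (y = 0),
# target B in water below it. Units are arbitrary.
A = (0.0, 1.0)
B = (1.0, -1.0)
V_AIR = 1.0
V_WATER = 0.75  # assumed: light travels at roughly 3/4 of its air speed in water


def travel_time(x):
    """Total time for the two-segment path A -> (x, 0) -> B."""
    d_air = math.hypot(x - A[0], A[1])
    d_water = math.hypot(B[0] - x, B[1])
    return d_air / V_AIR + d_water / V_WATER


def golden_section_min(f, lo, hi, tol=1e-10):
    """Locate the minimum of a single-dip function f on [lo, hi]."""
    ratio = (math.sqrt(5) - 1) / 2
    while hi - lo > tol:
        c = hi - ratio * (hi - lo)
        d = lo + ratio * (hi - lo)
        if f(c) < f(d):
            hi = d  # the minimum lies in [lo, d]
        else:
            lo = c  # the minimum lies in [c, hi]
    return (lo + hi) / 2


x_best = golden_section_min(travel_time, A[0], B[0])

# Sines of the angles measured from the perpendicular to the surface.
sin_air = (x_best - A[0]) / math.hypot(x_best - A[0], A[1])
sin_water = (B[0] - x_best) / math.hypot(B[0] - x_best, B[1])

# Where the straight line from A to B would cross the surface.
x_straight = A[0] + (B[0] - A[0]) * A[1] / (A[1] - B[1])

print("crossing point of the quickest path:", round(x_best, 4))
print("sin(air) / v_air    :", round(sin_air / V_AIR, 6))
print("sin(water) / v_water:", round(sin_water / V_WATER, 6))
print("time saved versus the straight line:",
      round(travel_time(x_straight) - travel_time(x_best), 6))
```

The quickest path bends: it spends a little more distance in the faster medium and less in the slower one, so it beats the straight line on time while losing to it on length.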

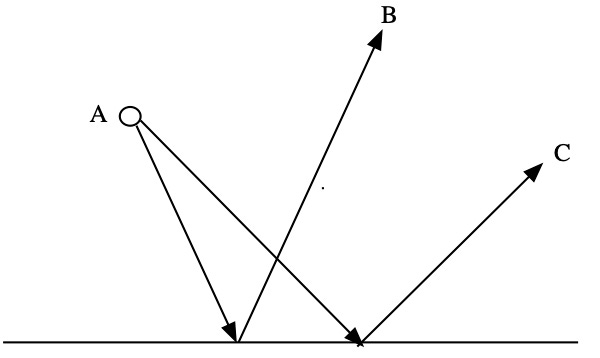

This should not lead you to conclude that light emerging from some source follows only one path, or that there is only one ray. There are in fact an infinite number of rays leaving any source. But only one will reach a given second point, which might be the position of your eye or a detector. In the diagram below several rays of light leave the source A, but only one of them both reflects off the mirror and reaches point B. That ray has followed two straight lines, and the angle that the incident ray (A to mirror) makes with the mirror is the same as the angle made by the reflected ray (mirror to B). By convention the angles are measured relative to a line drawn perpendicular to the mirror surface, but the principle is the same. Other rays are incident on the mirror at different angles and reach other points, C for example in the diagram. The incident and reflected angles in these cases are also equal.

Several rays emitted from source A are shown going to points B and C. The principle of least time states that each of these paths represents the least amount of time to go from A to each point, or the most efficient path.
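The equal-angle result for a flat mirror can be recovered the same way. The sketch below uses made-up coordinates for a source `A` and a detector `B` on the same side of a mirror lying along y = 0. It simply tries many candidate reflection points and keeps the one with the shortest total path (in a single medium, shortest and quickest are the same thing). The names and numbers are illustrative assumptions.

```python
import math

# Assumed positions, both above a mirror lying along y = 0.
A = (0.0, 1.0)  # source
B = (3.0, 2.0)  # detector


def path_length(x):
    """Length of the path A -> (x, 0) -> B via a point on the mirror."""
    return math.hypot(x - A[0], A[1]) + math.hypot(B[0] - x, B[1])


# Try 3001 evenly spaced reflection points between x = 0 and x = 3.
candidates = [3.0 * i / 3000 for i in range(3001)]
x_hit = min(candidates, key=path_length)

# Angles measured from the perpendicular to the mirror, in degrees.
angle_in = math.degrees(math.atan2(x_hit - A[0], A[1]))
angle_out = math.degrees(math.atan2(B[0] - x_hit, B[1]))

print("reflection point:", x_hit)
print("angle of incidence :", round(angle_in, 3))
print("angle of reflection:", round(angle_out, 3))
```

Nothing in the code mentions angles until after the search is done, yet the shortest path turns out to be the one with equal angles.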

It’s really more complicated than it seems, although the behavior can be encapsulated in the simple law: the angle of incidence = the angle of reflection. As a result of all this, when we look into a mirror with our displaced eyes we see the light reflected off the mirror as if it all comes from a position behind the mirror, as far behind the surface as the source is in front of it. This point is the position of a so-called virtual image, virtual because none of the light actually penetrates the mirror surface and goes there.

Here’s a video I made a while back that shows you how to locate virtual images experimentally.

The video illustrates how many rays leave a source but their reflections all lead back to a virtual image behind the mirror. Think about this the next time you look at yourself to comb your hair. It can be shown that this pattern would only occur if the incident and reflected angles were equal and, following Fermat, the light followed the path of least time. The diagram above is not drawn to scale but if you follow the two reflected rays backwards through the mirror surface they converge at a point behind the mirror. In the video the convergence is demonstrated to occur at the same distance behind the mirror as the object is in front of it. This is not true for sideview mirrors on cars (“objects may be closer than they appear”) as those mirrors are curved, but it is true for flat bathroom mirrors. Otherwise it would be hard to comb your hair. In a flat bathroom mirror the bathroom is reproduced to scale in the virtual space.
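The convergence of the back-traced rays is easy to verify with a little coordinate geometry. In this sketch (assumed coordinates, hypothetical helper names) a source sits 2 units in front of a mirror along y = 0. Two rays are reflected by flipping the vertical part of their direction, which is what equal angles amounts to, and the reflected lines are extended backwards until they cross.

```python
def reflected_ray(source, hit_x):
    """Ray from `source` after reflecting off the mirror y = 0 at (hit_x, 0).

    Returns (point_on_ray, direction). Equal angles means the horizontal
    part of the direction is kept and the vertical part is flipped.
    """
    dx = hit_x - source[0]
    dy = 0.0 - source[1]
    return (hit_x, 0.0), (dx, -dy)


def cross(a, b):
    """2D cross product, used to solve for the crossing of two lines."""
    return a[0] * b[1] - a[1] * b[0]


def intersect(p1, d1, p2, d2):
    """Point where the lines p1 + t*d1 and p2 + s*d2 meet (t may be negative)."""
    t = cross((p2[0] - p1[0], p2[1] - p1[1]), d2) / cross(d1, d2)
    return (p1[0] + t * d1[0], p1[1] + t * d1[1])


source = (0.0, 2.0)  # assumed: 2 units in front of the mirror
ray1 = reflected_ray(source, 1.0)
ray2 = reflected_ray(source, 3.0)
ray3 = reflected_ray(source, -1.5)

image = intersect(*ray1, *ray2)
image_check = intersect(*ray1, *ray3)

print("virtual image from rays 1 and 2:", image)
print("virtual image from rays 1 and 3:", image_check)
```

Whichever pair of reflected rays is used, the lines cross at the same point, directly behind the source and the same distance from the mirror.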

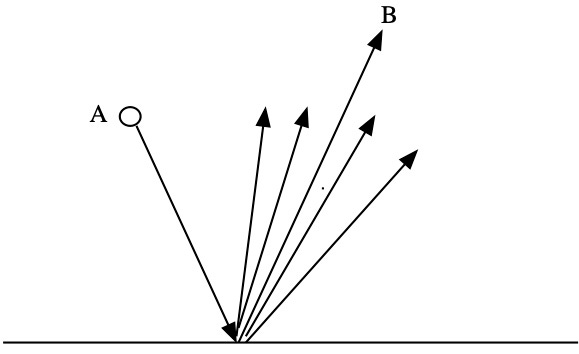

Really understanding how this happens requires some knowledge of how waves propagate and interfere, as light is a wave. Ray diagrams are only a way to represent wave behavior. In the figure below the ray that leaves the source at A and will reach B creates a ripple of waves on the surface of the mirror that can be represented as a fan of rays. Now imagine that these rays represent wave motions that will reach the vicinity of B. They don’t get there in exactly the minimal time, but if we imagine a graph of say time versus angle, then the minimum time must be at the bottom of a curve. On either side of that point the times are longer, but the curve is kind of flat there so the times for points near the minimum are almost the same as the minimum.

Wave fronts are spread out in space, so even though a ray misses point B the wave associated with it will pass over the point and interfere constructively with other waves that also almost get there in minimal time. They are all nearly in phase. Thus there is an accumulation of wave energy around the minimal time. It simply represents the most probable light path.
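This accumulation can be illustrated by adding up little arrows, one per possible reflection point, each turned by the phase the wave picks up along that path. The sketch below is a rough numerical illustration, not a full wave calculation: the geometry, the wavelength, and the two mirror strips being compared are all assumed values. It compares a strip of mirror around the least-time point with a strip of the same width far from it.

```python
import cmath
import math

# Assumed geometry: source A and detector B above a mirror along y = 0.
A = (-1.0, 1.0)
B = (1.0, 1.0)
WAVELENGTH = 0.01  # assumed, small compared with the path lengths


def path_length(x):
    """Length of the path A -> (x, 0) -> B."""
    return math.hypot(x - A[0], A[1]) + math.hypot(B[0] - x, B[1])


def summed_amplitude(x_start, x_end, steps=2000):
    """Add one unit arrow per small piece of mirror, each rotated by its phase."""
    dx = (x_end - x_start) / steps
    total = 0j
    for i in range(steps):
        x = x_start + (i + 0.5) * dx
        phase = 2 * math.pi * path_length(x) / WAVELENGTH
        total += cmath.exp(1j * phase) * dx
    return abs(total)


# The least-time reflection point is x = 0 by symmetry.
central = summed_amplitude(-0.2, 0.2)  # strip around the least-time point
edge = summed_amplitude(0.6, 1.0)      # equally wide strip far from it

print("contribution near the least-time path:", round(central, 4))
print("contribution far from it             :", round(edge, 4))
```

Near the minimum the path length barely changes, so the arrows point nearly the same way and add up. Far from it the path length changes quickly, the arrows spin around, and they mostly cancel.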

A ray leaving the source will produce a ripple as it reaches the mirror. The rays that nearly reach B in minimal time will coalesce around the net result that reaches B.

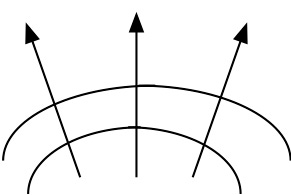

Rays are a way to represent wave fronts.

This is not unlike events that randomly coalesce around some probable outcome. It is no accident that when rolling two dice seven is the most likely outcome, as there are six ways to get to seven (6 and 1, 1 and 6, 5 and 2, 2 and 5, 4 and 3, 3 and 4), but there are almost as many ways, five each, to roll six or eight. The most likely outcome resides in a neighborhood of likely outcomes. This bunching of results occurs near a maximum, a peak in a curve if you will, that decreases on either side. The bunching of nearly equal time paths to point B occurs near a minimum, with times that increase on either side. That’s some of the logic. The bottom line is that the path of least time (or equal angles) is the most probable result.
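The dice counts are quick to tabulate. This short sketch lists all 36 equally likely rolls of two dice and counts how many give each total; the variable names are just for illustration.

```python
from collections import Counter
from itertools import product

# Count the number of ways each total can come up with two six-sided dice.
ways = Counter(a + b for a, b in product(range(1, 7), repeat=2))

for total in range(2, 13):
    bar = "#" * ways[total]
    print(f"{total:2d} {bar:<6} {ways[total]}/36")
```

The printed bars form a peak at seven with six and eight just below it, which is the neighborhood of likely outcomes described above.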

One might say the same thing happens in evolutionary biology. Bear with me as I wander outside my field. At any point in time the natural environment is populated by species well adapted to their particular habitat or niche. This is true even if the species is threatened, as many are today, primarily due to destruction of that habitat by human civilization’s expansion. That they are still well adapted is a necessary outcome of evolution through natural selection. Over many generations a population is sifted like sand so that individuals with traits that increase their reproductive success tend to dominate the gene pool. These are the animals and plants we observe, almost all of them well adapted. This process is also a matter of probability, as individuals with traits well suited to their environment are simply more likely to survive and reproduce.

Perhaps my favorite author regarding evolution is the British biologist Richard Dawkins. In one of his many books he took on the “problem” posed by critics of evolution regarding the development of eyes and the sense of sight. How could something so complex simply evolve? Dawkins argues that even if a very crude “eye” emerged through some mutation, that would be enough to get the ball rolling. This “eye” might at best be some element of an animal’s body that reacted to light and sent signals to the nervous system (which might include a brain), so calling it an eye might be a stretch, as it might only be (say) one percent as effective as a human or animal eye. But one percent is better than nothing, argues Dawkins, and it could still help ward off predators or help in finding a mate. Evolution, over many generations, would favor individuals with this sense, and as time goes on favor even more those individuals whose “sight” was better. After a time all individuals in a population would have eyes that worked much better than the one percent starting point. There would be a coalescence around this outcome, whose probability grew with time (a long time, perhaps). Evolution’s critics argued that the eye was too complex to have just evolved. But repeated generational cycles will fix that, even starting from crude and accidental beginnings.
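How a small edge compounds over generations can be sketched with a standard textbook recursion for selection on a single inherited trait. This is my own illustration, not something taken from Dawkins: the starting frequency, the 1% reproductive advantage `s`, and the function names are all assumptions, and the 1% here is an advantage in offspring left behind, not the "one percent of an eye" in the argument above.

```python
def next_frequency(p, s):
    """Trait frequency after one generation.

    Assumes carriers leave (1 + s) offspring for every 1 left by
    non-carriers, and that offspring inherit the parent's type.
    """
    return p * (1 + s) / (p * (1 + s) + (1 - p))


def trait_history(p0=0.001, s=0.01, generations=2000):
    """Frequency of the trait in each generation, starting from p0."""
    history = [p0]
    for _ in range(generations):
        history.append(next_frequency(history[-1], s))
    return history


history = trait_history()

for generation in (0, 250, 500, 750, 1000, 1500, 2000):
    print(f"generation {generation:4d}: {history[generation]:.4f}")
```

Under these assumptions a trait carried by one individual in a thousand ends up in nearly everyone, slowly at first and then quickly, purely because its carriers are slightly more likely to reproduce.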

The inspiration for this essay came from re-reading parts of “QED”, a book by the famous, and now late, physicist Richard Feynman. The book was based on a series of lectures Feynman gave to explain quantum electrodynamics to the general public; it is the theory for which he and two others received the Nobel Prize in physics. I found it curious when I first read the book that Feynman spent so much time discussing the principle of least time in the context of reflection and mirrors, when his stated intention was to discuss quantum electrodynamics, which is the quantum theory of electric and magnetic fields. But I think his intention was to demonstrate that what nature presents to us are those results biased towards the most likely, not the fixed and predetermined.

Feynman’s main contributions to QED were the mathematical techniques he developed that allowed certain types of calculations to be performed by representing them as sums consisting of an infinite number of terms, with each term representing a different virtual process. So the interaction between two electrons could be thought of as a sum of increasingly complex processes. The more complex the process, the less likely it was and the less statistical weight it had in the sum, a little bit like the various light rays that cluster around the observed rays that reflect off the surface of a mirror. In QED an actual event like two electrons approaching each other is viewed as a series of virtual events, such as the exchange of a photon between the electrons, or a photon exchange that prompts the creation of a positron-electron pair, and so on. What is actually measured in an experiment, such as electron properties, depends on this weighted sum.

Feynman’s approach utilized the randomness and indeterminacy of the quantum world by imagining the range of possible events without being able to determine if any actually occurred. Any result derived in this manner is simply the most probable, like the coalescence of wave energy around the observed light ray, or the gene pool of a species. The idea of a minimum time or other efficiency measure is not immediately obvious in all of these cases unless you recognize that the least time idea in optics is equivalent to the most probable pattern drawn from a random sea of possibilities. I believe the idea has merit.